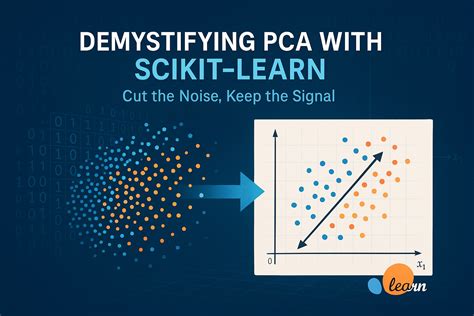

Have you ever struggled with understanding complex datasets and finding patterns in your data? Principal Component Analysis (PCA) is an essential technique in data science that can simplify your analysis, uncover hidden patterns, and make your datasets more manageable. This guide will walk you through the ins and outs of PCA using Scikit Learn, a powerful Python library, with practical, actionable advice to help you make the most of this technique. From beginners to advanced practitioners, this guide will provide you with a clear, problem-solving roadmap to leverage PCA for your data analysis needs.

Why PCA Matters

Principal Component Analysis (PCA) is a statistical method used for dimensionality reduction, which means it helps to reduce the number of random variables under consideration. By transforming a large set of variables into a smaller one that still contains most of the information, PCA simplifies data visualization, makes data processing more efficient, and can improve the performance of machine learning models. If you’re working with large, complex datasets, PCA can be your best friend. It helps to:

- Eliminate multicollinearity

- Reduce noise

- Make visualization easier

- Improve computational efficiency
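To make the first point concrete, here is a minimal sketch on synthetic data (the data and variable names are assumptions made for illustration). It shows that two strongly collinear input features become uncorrelated once the data is expressed in terms of principal components:

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic data (illustrative only): x2 is almost a copy of x1,
# so the two are strongly collinear; x3 is independent noise.
x1 = rng.normal(size=200)
x2 = x1 + 0.1 * rng.normal(size=200)
x3 = rng.normal(size=200)
X = np.column_stack([x1, x2, x3])

X_scaled = StandardScaler().fit_transform(X)
scores = PCA().fit_transform(X_scaled)

# Correlations between the original features vs. between the components
feature_corr = np.corrcoef(X_scaled, rowvar=False)
component_corr = np.corrcoef(scores, rowvar=False)
max_offdiag = np.abs(component_corr - np.eye(3)).max()

print("corr(x1, x2):", round(feature_corr[0, 1], 3))
print("largest correlation between components:", max_offdiag)
```

The component scores are uncorrelated by construction, which is why PCA is often used ahead of models that are sensitive to multicollinearity.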

Quick Reference

- Immediate action item: Start by installing Scikit Learn using pip install scikit-learn if you haven’t already.

- Essential tip: For PCA in Scikit Learn, use the PCA class. Instantiate an object, fit it on your dataset, and apply the .transform() method.

- Common mistake to avoid: Forgetting to scale your data before applying PCA. PCA is sensitive to the scales of input features, so use StandardScaler() from Scikit Learn to standardize your dataset.
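The three points above can be sketched in a few lines. This is one possible arrangement, not the only one: it chains StandardScaler and PCA with make_pipeline so that scaling is never forgotten. The random data and the names `X`, `pipeline`, and `X_reduced` are assumptions made for the example.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)

# Made-up data: 100 samples, 4 features on very different scales
X = rng.normal(size=(100, 4)) * np.array([1.0, 10.0, 100.0, 1000.0])

# Scaling and PCA are fitted together, so new data is scaled the same way
pipeline = make_pipeline(StandardScaler(), PCA(n_components=2))
X_reduced = pipeline.fit_transform(X)

# Later batches reuse the fitted scaler and components
X_new_reduced = pipeline.transform(X[:5])

print(X_reduced.shape, X_new_reduced.shape)
```

Wrapping both steps in a pipeline also helps avoid fitting the scaler on test data by accident.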

Getting Started with PCA in Scikit Learn

Before diving into the practical aspects of PCA, it’s crucial to understand the theoretical foundations. PCA finds the directions (called principal components) along which the variation in the data is maximized. The components are mutually orthogonal and ordered by how much variance they capture: the first captures the most, the second captures the most of what remains, and so on.
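As a rough illustration of that idea, the sketch below computes the principal directions by hand, as eigenvectors of the sample covariance matrix, and compares them with what the PCA class reports. The synthetic data and variable names are assumptions made for the example.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)

# Hypothetical 2-D data with correlated features
X = rng.multivariate_normal(
    mean=[0, 0], cov=[[3.0, 1.5], [1.5, 1.0]], size=500
)

# Center the data, then eigendecompose the sample covariance matrix
X_centered = X - X.mean(axis=0)
cov = np.cov(X_centered, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# eigh returns eigenvalues in ascending order; PCA sorts by descending variance
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]

pca = PCA(n_components=2).fit(X)

# Eigenvalues correspond to explained_variance_; eigenvectors correspond to
# the rows of components_, up to an arbitrary sign flip.
print(np.allclose(eigenvalues, pca.explained_variance_))
print(np.allclose(np.abs(eigenvectors.T), np.abs(pca.components_)))
```

The sign of each direction is arbitrary, which is why the comparison uses absolute values.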

Here’s a step-by-step guide to performing PCA with Scikit Learn:

Step 1: Install and Import Necessary Libraries

Start by installing Scikit Learn if you haven’t already:

- Install:

pip install scikit-learn

- Import: Once installed, import the necessary libraries:

import numpy as np

import pandas as pd

from sklearn.decomposition import PCA

from sklearn.preprocessing import StandardScaler

Step 2: Prepare Your Dataset

For PCA, it’s crucial to scale your dataset because PCA is sensitive to the scale of the variables. Use StandardScaler() to standardize your features by removing the mean and scaling to unit variance.

Here’s an example:

data = pd.DataFrame({'Feature1': [1, 2, 3, 4, 5], 'Feature2': [5, 4, 3, 2, 1]})

scaler = StandardScaler()

scaled_data = scaler.fit_transform(data)

Step 3: Apply PCA

Now, let’s apply PCA to your dataset:

pca = PCA(n_components=2) # You can adjust the number of components

principalComponents = pca.fit_transform(scaled_data)

Step 4: Interpret the Results

The principal components are stored in principalComponents. You can also retrieve other useful information:

explained_variance = pca.explained_variance_ratio_

This array gives you the proportion of the variance that each principal component accounts for.
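For the toy dataset from Step 2, a sketch of this inspection might look as follows. The `loadings` table and the PC1/PC2 labels are additions made for illustration. Because Feature1 and Feature2 are perfectly negatively correlated, essentially all of the variance lands on the first component.

```python
import numpy as np
import pandas as pd
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

data = pd.DataFrame({'Feature1': [1, 2, 3, 4, 5], 'Feature2': [5, 4, 3, 2, 1]})
scaled_data = StandardScaler().fit_transform(data)

pca = PCA(n_components=2)
pca.fit(scaled_data)

# Share of the total variance captured by each component
explained_variance = pca.explained_variance_ratio_
print(np.round(explained_variance, 3))

# components_ has one row per component and one column per original feature;
# wrapping it in a DataFrame makes the weights easier to read.
loadings = pd.DataFrame(
    pca.components_, columns=data.columns, index=['PC1', 'PC2']
)
print(loadings.round(3))
```

The weights in each row show how strongly each original feature contributes to that component; their signs can flip between runs or library versions without changing the interpretation.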

Step 5: Visualize the Components

To better understand your PCA results, you can visualize the principal components:

import matplotlib.pyplot as plt

plt.scatter(principalComponents[:, 0], principalComponents[:, 1])

plt.show()

Advanced PCA Techniques

Once you’ve mastered the basics, it’s time to explore some advanced techniques that can make your PCA analysis even more powerful.

Feature Selection with PCA

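PCA can be used to shrink your feature set by keeping only the components that capture most of the variance in your dataset. Strictly speaking, this is feature extraction rather than feature selection: each component is a linear combination of all the original features, not a subset of them. Either way, it simplifies your model and can improve its performance.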

Here’s how to do it:

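pca_full = PCA().fit(scaled_data) # Fit with all components so the cumulative sum covers the full variance

cum_var_ratio = np.cumsum(pca_full.explained_variance_ratio_)

optimal_components = np.argmax(cum_var_ratio >= 0.95) + 1 # Smallest count reaching 95% of the variance

pca = PCA(n_components=optimal_components)

principalComponents = pca.fit_transform(scaled_data)

PCA for Time Series Data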

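Time series data can be complex, but PCA can help by reducing the number of correlated series you have to work with. Keep in mind that PCA treats each row as an independent observation and ignores the order of the rows, so it compresses the columns rather than modeling temporal structure; the transformed rows stay in their original time order. After standardizing your time series data, apply PCA as usual: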

time_series_data = pd.DataFrame(some_time_series_data)

scaled_time_series = scaler.fit_transform(time_series_data)

pca = PCA(n_components=2)

time_series_pca = pca.fit_transform(scaled_time_series)

Combining PCA with Other Techniques

PCA can be combined with other techniques such as clustering or regression to extract more meaningful insights. For example, after applying PCA, you can cluster the principal components using K-Means:

from sklearn.cluster import KMeans

kmeans = KMeans(n_clusters=3, n_init=10, random_state=0) # Fixed seed for reproducible clusters

clusters = kmeans.fit_predict(principalComponents)

Practical FAQ

How do I choose the number of components for PCA?

Choosing the number of components for PCA depends on how much variance you want to capture. A common approach is to look at the cumulative explained variance ratio. You can select the smallest number of components that explain at least 95% of the variance. This can be done by plotting the cumulative explained variance and visually inspecting where it plateaus. Another method is to use cross-validation and choose the number of components that gives the best performance on a validation set.
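A minimal sketch of the 95% rule is shown below, using the Iris dataset bundled with Scikit Learn purely as a stand-in. It computes the threshold by hand and also passes a float to n_components, which asks PCA to keep just enough components to reach that share of the variance. The variable names are assumptions made for the example.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

X = load_iris().data  # 150 samples, 4 features; stand-in data only
X_scaled = StandardScaler().fit_transform(X)

# Manual route: fit all components, then find where the cumulative
# explained variance first reaches 95%
pca_full = PCA().fit(X_scaled)
cum_var_ratio = np.cumsum(pca_full.explained_variance_ratio_)
n_manual = int(np.argmax(cum_var_ratio >= 0.95) + 1)

# Built-in route: a float between 0 and 1 is read as a variance target
pca_auto = PCA(n_components=0.95, svd_solver="full").fit(X_scaled)

print("manual choice:", n_manual)
print("chosen by PCA:", pca_auto.n_components_)
```

Both routes should agree; the float form is simply more compact. The 95% figure is a convention rather than a rule, so treat it as a starting point.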

Can PCA be applied to categorical data?

PCA is designed for continuous numerical data. If you have categorical data, you’ll need to encode it into numerical form first, using techniques like one-hot encoding. After encoding, you can apply PCA on the transformed dataset. However, keep in mind that PCA captures only linear structure, and one-hot columns are binary, so the resulting components can be hard to interpret. In such cases, consider a nonlinear method like t-SNE for visualization, or a technique designed for categorical data such as multiple correspondence analysis (MCA).
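The encode-then-reduce workflow might look like the sketch below. The small table, its column names, and its values are all hypothetical, and pandas' get_dummies is just one of several ways to one-hot encode.

```python
import pandas as pd
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Hypothetical mixed-type data: two numeric columns and one categorical
df = pd.DataFrame({
    "height_cm": [170, 165, 180, 175, 160, 185],
    "weight_kg": [65, 59, 81, 72, 55, 90],
    "city": ["Paris", "Rome", "Paris", "Berlin", "Rome", "Berlin"],
})

# One-hot encode the categorical column; numeric columns pass through
encoded = pd.get_dummies(df, columns=["city"], dtype=float)

# Standardize everything, then project onto two components
scaled = StandardScaler().fit_transform(encoded)
components = PCA(n_components=2).fit_transform(scaled)

print(list(encoded.columns))
print(components.shape)
```

Whether to standardize the one-hot columns along with the numeric ones is a judgment call; here they are scaled together for simplicity.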

What happens if I don’t scale my data before applying PCA?

If you don’t scale your data before applying PCA, the results can be skewed by the scale of the variables. PCA looks for directions of maximum variance, and variance depends on the units of measurement. For example, if one feature has values in the thousands and another has values in the hundreds, the first components will be dominated by the larger-scale feature regardless of how informative it is. Scaling your data with StandardScaler() puts every feature on the same footing (zero mean, unit variance), leading to more interpretable principal components.
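The effect is easy to reproduce. The sketch below uses two independent, made-up features that differ only in scale and compares the explained variance ratios with and without standardization; all names and numbers are assumptions made for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)

# Two independent synthetic features on very different scales
small = rng.normal(loc=0, scale=1, size=300)
large = rng.normal(loc=0, scale=1000, size=300)
X = np.column_stack([small, large])

# PCA on the raw data vs. PCA on standardized data
pca_raw = PCA(n_components=2).fit(X)
pca_scaled = PCA(n_components=2).fit(StandardScaler().fit_transform(X))

print("unscaled:", np.round(pca_raw.explained_variance_ratio_, 4))
print("scaled:  ", np.round(pca_scaled.explained_variance_ratio_, 4))

# Weight of each original feature in the first unscaled component
print("first unscaled component:", np.round(pca_raw.components_[0], 4))
```

Without scaling, the first component is almost entirely the large-scale feature. With scaling, the two independent features share the variance roughly evenly, which is what you would expect when neither is more informative than the other.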

With this guide, you should now have a comprehensive understanding of PCA and how to implement it using Scikit Learn. From the basics of scaling and applying PCA to advanced techniques like feature selection and combining PCA